A group of researchers from leading US and European universities has published the results of a large-scale experiment showing that modern large language models (LLMs) such as GPT-4 can de-anonymize the authors of texts significantly more accurately than previous methods. In the study, the models analyzed stylistic 'fingerprints' in writing: distinctive combinations of vocabulary, syntax, and rhythm. On a dataset of tens of thousands of posts from platforms such as Reddit and Twitter, the models matched anonymous messages to the correct author profiles, in some cases with accuracy above 90%. The preprint, released in open access earlier this month, has not yet undergone full peer review but has already caused a stir in the scientific and IT communities.

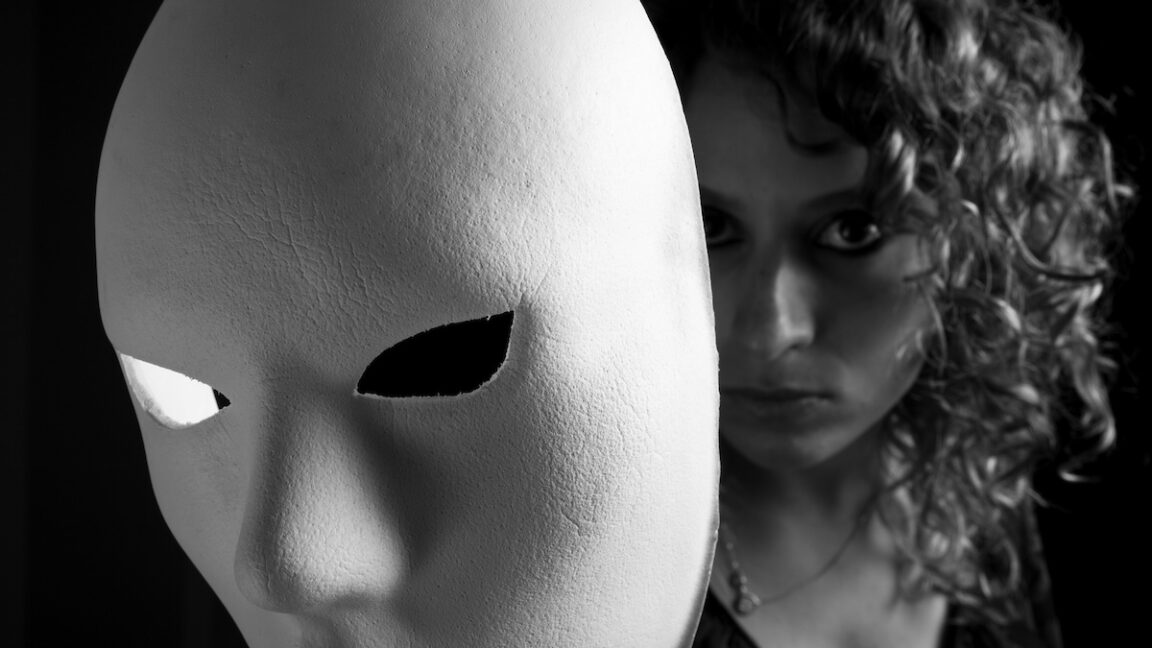

The context of this work is critical to understanding digital privacy. For decades, pseudonyms and avatars were considered a reliable shield that let users express opinions freely, explore their identity, or discuss sensitive topics without fear of reprisal or discrimination. Anonymity underpins many online communities, as well as investigative journalism and political activism. However, the development of AI, especially models trained on colossal volumes of text, has created a qualitatively new threat. De-anonymization used to require manual analysis or specialized algorithms built for a specific author; LLMs can now do it automatically and at scale, detecting patterns that a human reader would not notice.

Technically, the researchers' method relies on the ability of LLMs to form high-level representations of both the meaning and the style of a text. The model first 'reads' texts known to be written by the target author and extracts stable patterns: favorite words, sentence length, argument structure, even characteristic errors. This digital 'style profile' is then compared against the anonymous text. The key difference from older methods is that LLMs do not merely search for keywords; they take into account context and subtle nuances of an author's style. Several models were tested, both commercial and open-source. The largest and most recent ones performed best, pointing to a direct link between a model's capability and its de-anonymization performance.
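The study's pipeline is not public, and the authors deliberately withheld some details, so nothing here reproduces it. To make the 'style profile' idea concrete, below is a minimal sketch of a classic stylometry baseline, the kind of older method LLMs are being compared against. It assumes character trigram frequencies as the fingerprint and cosine similarity as the matching rule; both choices, the function names `style_profile`, `cosine` and `attribute`, and the two toy authors are hypothetical (Python 3.9+).

```python
# Hypothetical classic-stylometry baseline for illustration only.
# It is NOT the LLM-based method from the study, whose details are unpublished.
import math
import re
from collections import Counter


def style_profile(text: str, n: int = 3) -> Counter:
    """Crude stylistic fingerprint: frequencies of character n-grams.

    Character n-grams (an assumed feature choice) pick up favorite words,
    spelling habits and punctuation quirks at the same time.
    """
    normalized = re.sub(r"\s+", " ", text.lower())
    return Counter(normalized[i:i + n] for i in range(len(normalized) - n + 1))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors (0.0 if either is empty)."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def attribute(anonymous_text: str, known_texts: dict[str, list[str]]) -> tuple[str, float]:
    """Return the candidate author whose pooled profile is closest to the anonymous text."""
    target = style_profile(anonymous_text)
    best_author, best_score = "", -1.0
    for author, samples in known_texts.items():
        score = cosine(target, style_profile(" ".join(samples)))
        if score > best_score:
            best_author, best_score = author, score
    return best_author, best_score


# Toy usage with two hypothetical authors.
known = {
    "alice": ["Honestly, I reckon the whole thing is overblown.",
              "Honestly, mate, I reckon we should wait and see."],
    "bob": ["In my view, the data clearly indicate a significant effect.",
            "The results, in my view, clearly support the hypothesis."],
}
author, score = attribute("Honestly, I reckon it'll blow over, mate.", known)
print(author)  # → alice
```

A baseline like this only counts surface patterns, so it misses what the study attributes to LLMs: sensitivity to context and argument structure. Replacing the trigram counts with learned text representations would be the natural next step, but the researchers' exact approach is not described.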

The reaction from cybersecurity and digital rights experts was immediate and alarmed. Specialists call the research compelling evidence that anonymity is eroding in the AI era. 'This is not a theoretical threat, but a working tool that corporations can use for data collection today, and repressive regimes can use to find dissenters,' stated one analyst. Representatives of LLM developers have not yet commented officially, but according to sources, internal discussions of ethical frameworks and possible limits on such uses of their technology have begun in the industry. Critics also warn that the research could serve as a manual for malicious actors, although the authors deliberately omitted some technical details to prevent this.

For the industry, this points to an impending crisis of trust. Platforms that market anonymity as a key feature may face a mass exodus of users. Business models built on collecting and analyzing 'depersonalized' data are also at risk, because such data can now be linked back to specific people. For ordinary users, the consequences are even more serious: the safety of dissidents, investigative journalists, corporate whistleblowers, and even people who run sensitive blogs under pseudonyms is threatened. The right to anonymous expression, fundamental to a free internet, is being called into question.

The outlook now depends on a race between technology and regulation. On one hand, de-anonymization methods will keep improving and may be integrated into commercial services. On the other, work on countermeasures will intensify: AI tools that mask a writer's stylistic 'handwriting' by automatically rewriting text so that its meaning is preserved but its style changes. Lawyers predict a wave of new legislation restricting the use of AI for de-anonymization without a court order. However, the global nature of the internet makes any regulation extremely difficult. The main question remains open: can digital anonymity be preserved at all, or will it inevitably become a relic of the pre-AI era?
