Fabi.ai

Combines SQL, Python, and AI automation in a collaborative environment to conquer complex data analyses.

Description

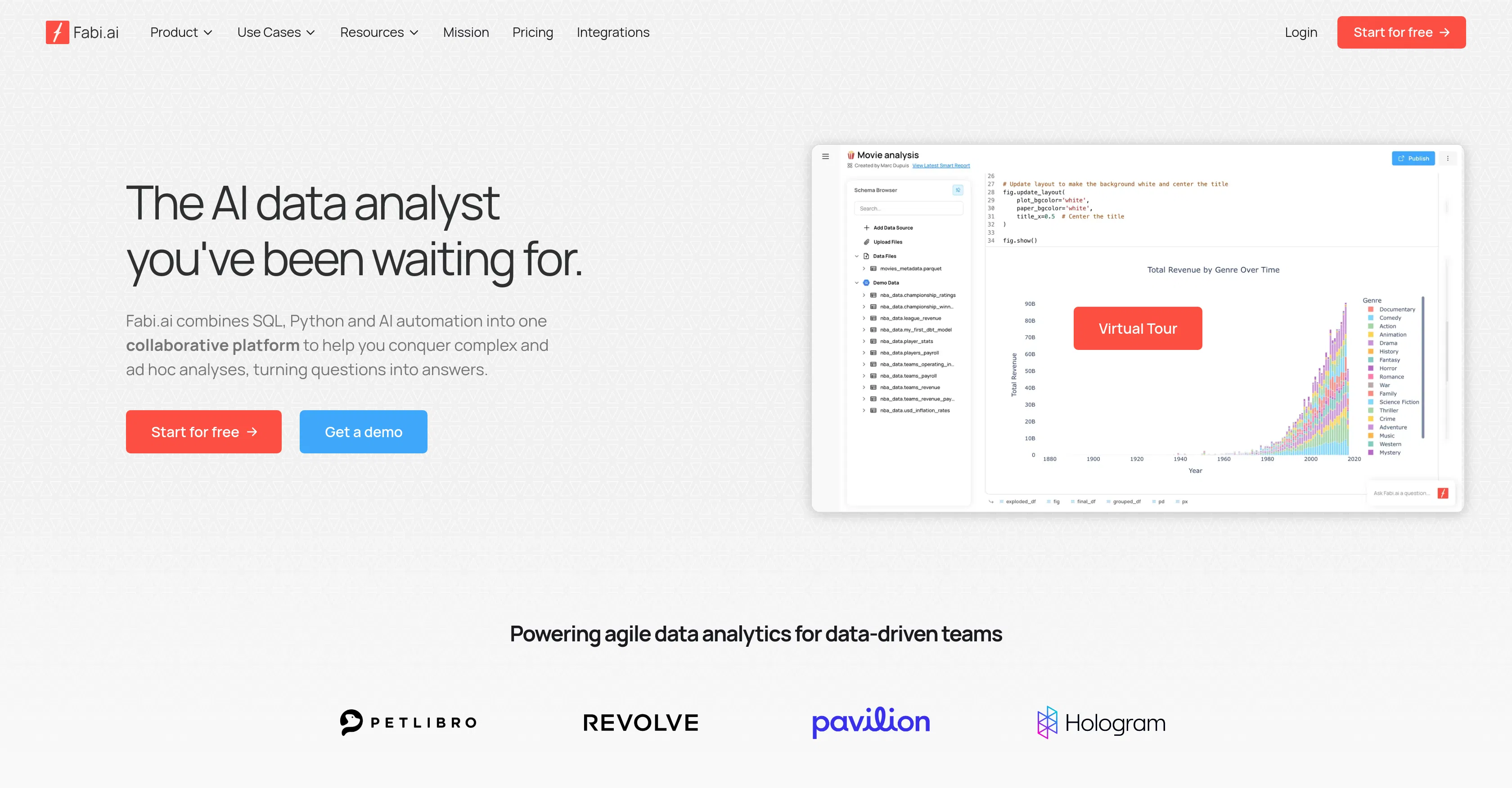

Fabi.ai is an AI-powered data analysis platform that unifies the workflows of data professionals by combining SQL, Python, and AI-driven automation in a single collaborative environment. Its core value is shortening the path from raw data queries to actionable insights, so data teams can take on complex and ad hoc analyses faster. The platform aims to bridge the gap between data extraction and business intelligence, making advanced analytics more accessible and efficient.

Key features: Users write and run SQL queries directly in a notebook-style interface and combine them with Python code for advanced data manipulation and machine learning tasks. AI automation suggests query optimizations, generates code snippets, and explains complex results in plain language. Real-time collaboration lets multiple team members work on the same analysis simultaneously, with version control and commenting. The platform can also generate visualizations from query results in one click and schedule automated reports and data pipelines.
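To make the SQL-plus-Python pattern concrete, here is a minimal, generic sketch. It does not use Fabi.ai's own interface; it assumes Python's standard-library `sqlite3` module, a hypothetical `orders` table, and a hypothetical `monthly_sales_report` function. The database does the grouping in SQL, and Python then post-processes the result, much like a monthly sales report built in a mixed SQL/Python notebook.

```python
import sqlite3


def monthly_sales_report(conn: sqlite3.Connection) -> list[dict]:
    """Hypothetical helper: aggregate sales per month in SQL, then add
    month-over-month change in Python. Assumes an `orders` table with
    ISO-formatted `order_date` text and a numeric `amount` column."""
    # SQL step: let the database group orders by month (YYYY-MM) and sum them.
    rows = conn.execute(
        """
        SELECT substr(order_date, 1, 7) AS month, SUM(amount) AS total
        FROM orders
        GROUP BY month
        ORDER BY month
        """
    ).fetchall()

    # Python step: post-process the query result. (A SQL window function
    # such as LAG could also do this; Python is used here to show the hand-off.)
    report = []
    previous = None
    for month, total in rows:
        # No change is reported for the first month or after a zero total.
        change = None if previous in (None, 0) else (total - previous) / previous
        report.append({"month": month, "total": total, "change": change})
        previous = total
    return report


if __name__ == "__main__":
    # Small in-memory example dataset (illustrative only).
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (order_date TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [("2024-01-05", 100.0), ("2024-01-20", 50.0), ("2024-02-03", 180.0)],
    )
    for row in monthly_sales_report(conn):
        print(row)
```

Splitting the work this way keeps heavy aggregation close to the data, while leaving derived metrics and downstream steps (charts, models) to Python.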

What sets Fabi.ai apart is its end-to-end approach to the data analysis lifecycle, which removes the need to switch between separate tools such as database clients, Jupyter notebooks, and BI dashboards. It is a cloud-native platform focused on security and scalability, with integrations for major data warehouses (e.g., Snowflake, BigQuery, Redshift) and for business applications via API. Its AI is fine-tuned for data work: it can read data schemas and context to offer relevant assistance, which distinguishes it from generic code assistants.

Fabi.ai is ideal for data analysts, data scientists, and business intelligence teams in mid-sized to large organizations that need rapid, iterative data exploration. Specific use cases include building and sharing interactive dashboards for stakeholders, automating monthly sales performance reports, running deep-dive exploratory analyses to validate product features, and creating reproducible data pipelines for machine learning model training. It is particularly valuable for teams that want to broaden data access while maintaining governance and technical rigor.