HoneyHive

Provides a platform for safe deployment and monitoring of language models in production.

Description

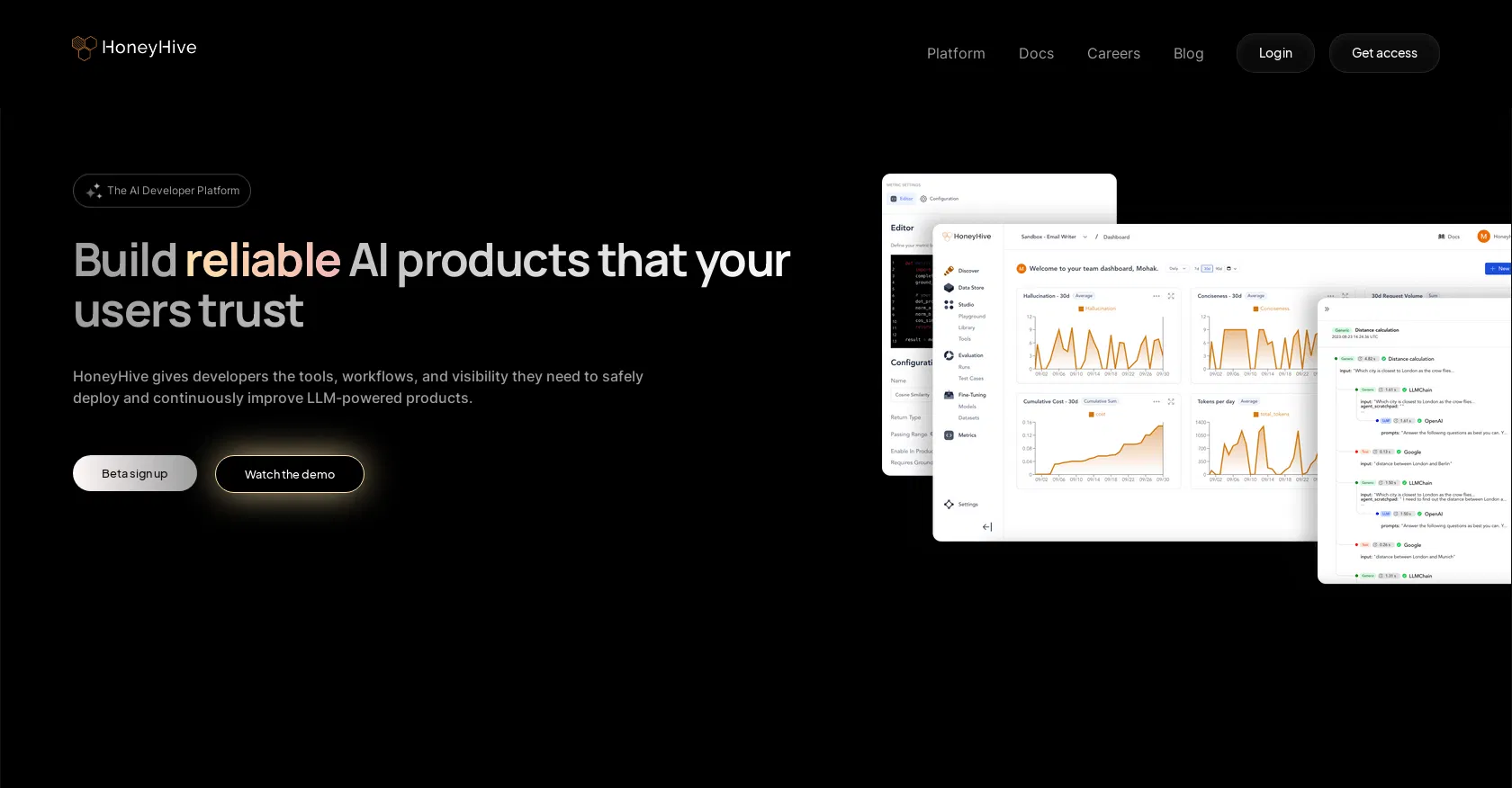

HoneyHive is a developer platform for teams building with large language models (LLMs). Its core value is a set of tools for safely deploying models to production and continuously improving them. The platform lets developers and machine learning engineers manage the entire lifecycle of an LLM application, from prototyping to production monitoring, while keeping reliability and control.

Key features: the platform offers tools for testing and evaluating models on custom datasets, making it possible to compare the performance of different models and prompts. It includes a monitoring and tracing system that tracks requests, costs, latencies, and response quality in real time. HoneyHive also supports collaborative work on prompts and model configurations, error tracking and analysis, and the collection of user feedback for later model refinement.

The platform is designed to be model- and framework-agnostic: it works with any LLM (e.g., from OpenAI, Anthropic, or open models) and any deployment environment. It offers a web interface and an API for integration into existing development pipelines. Technically, HoneyHive focuses on experiment reproducibility, detailed logging of every stage of a chain's operation, and analytics that show how a model behaves on real user data, not just on test sets.
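To make the idea of logging every stage of a chain more concrete, here is a minimal, generic sketch in Python. It does not use HoneyHive's SDK or reflect its data model; the `Span` and `Trace` classes, the `fake_llm` stand-in, the stage names, and the cost figure are all hypothetical and exist only to illustrate recording per-stage latency and cost for a single request.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    """One recorded stage of a chain (hypothetical structure, not HoneyHive's schema)."""
    name: str
    latency_s: float = 0.0
    cost_usd: float = 0.0
    metadata: dict = field(default_factory=dict)


@dataclass
class Trace:
    """Collects the spans produced while handling a single request."""
    spans: list = field(default_factory=list)

    @contextmanager
    def stage(self, name, **metadata):
        # Time the wrapped block and append the span even if the block raises.
        span = Span(name=name, metadata=metadata)
        start = time.perf_counter()
        try:
            yield span
        finally:
            span.latency_s = time.perf_counter() - start
            self.spans.append(span)

    def total_cost(self):
        return sum(s.cost_usd for s in self.spans)

    def total_latency(self):
        return sum(s.latency_s for s in self.spans)


def fake_llm(prompt):
    """Stand-in for a real model call; returns text and a made-up cost in USD."""
    return prompt.upper(), 0.002


def answer(question, trace):
    # Stage 1: retrieval (placeholder, no real search is performed).
    with trace.stage("retrieve", query=question):
        context = "placeholder context"
    # Stage 2: generation, with the call's cost attached to the span.
    with trace.stage("generate", model="example-model") as span:
        text, cost = fake_llm(f"{context}: {question}")
        span.cost_usd = cost
    return text


trace = Trace()
result = answer("what is tracing?", trace)
for s in trace.spans:
    print(f"{s.name}: {s.latency_s:.6f}s, ${s.cost_usd:.4f}")
```

In a real system the collected spans would be sent to an observability backend rather than printed, so that cost and latency can be aggregated per stage across many requests.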

HoneyHive is suited to engineering and product teams integrating LLMs into commercial applications or internal services. This includes startups developing AI products as well as large companies that need control and observability over model performance in production. Use cases include monitoring and optimizing chatbots, AI-based assistants, semantic search systems, and any application where the stability, cost, and quality of generative AI responses are critical.