LangWatch

Optimizes LLM-based applications to accelerate development and improve the quality of AI products.

Description

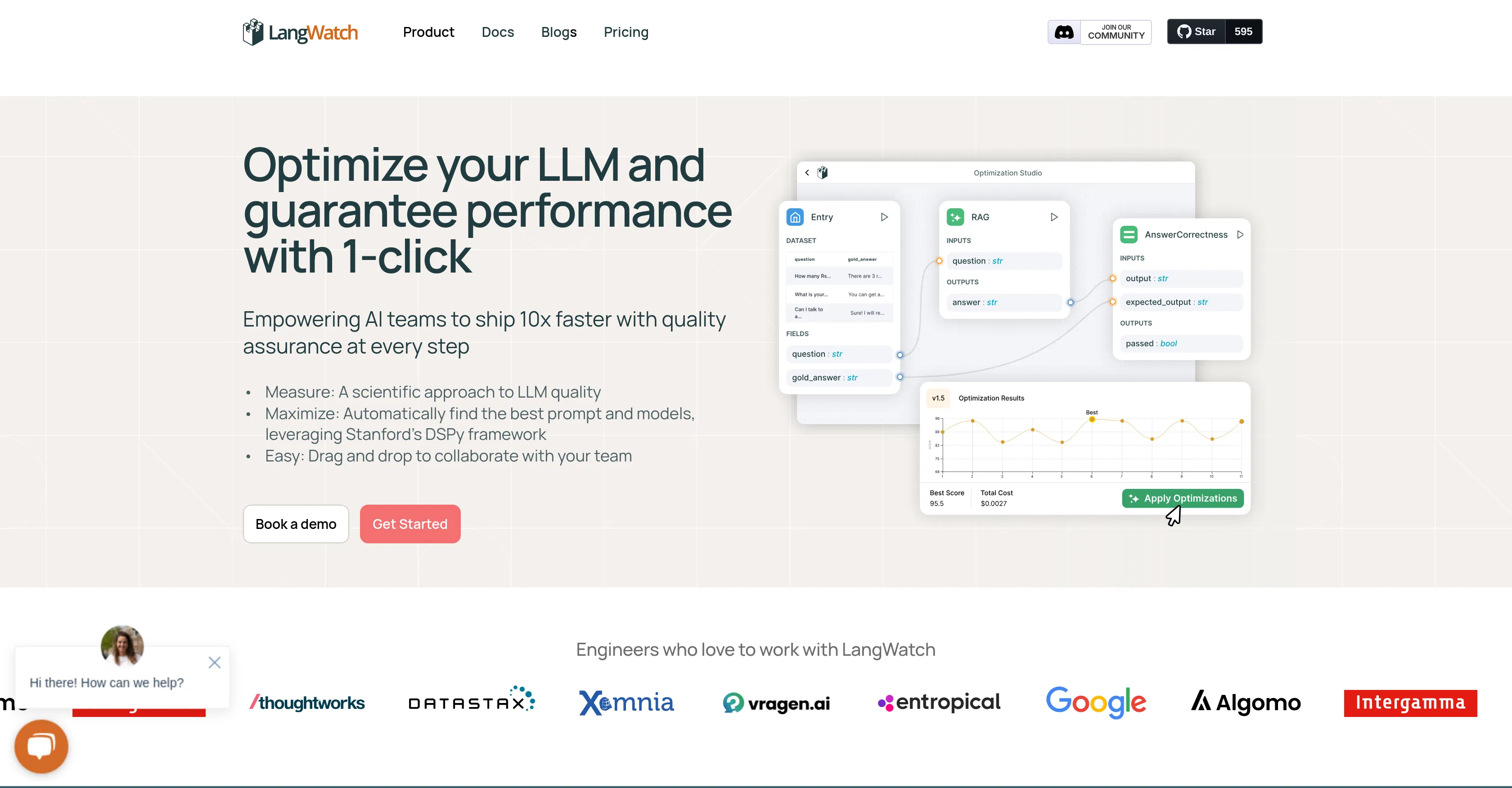

LangWatch is a platform that helps AI teams optimize applications built on large language models (LLMs). Its main value is a faster development cycle and higher-quality end products, achieved by automating key parts of model tuning and evaluation. Developers and machine learning engineers can identify and resolve issues sooner, which leads to more stable and efficient AI solutions.

Key features include automatic search for optimal prompts and models using Stanford's DSPy framework; tools for testing and quality assurance of generated content; real-time monitoring of LLM performance to track metrics and detect anomalies; and analytics that visualize experiment results to support informed decisions. The platform provides a single environment for managing the entire lifecycle of an LLM application.

A key differentiator of LangWatch is its deep integration with research frameworks such as DSPy, which automate engineering tasks like prompt tuning that would otherwise be done by hand. On the technical side, the platform offers an API for integration into existing pipelines, supports both cloud-hosted and locally run models, and provides dashboards that present data clearly. This flexibility lets teams adapt it to the needs of specific projects.

Ideal for development teams and machine learning engineers who build and maintain products based on large language models, such as chatbots, automated content systems, or analytical tools. The platform is particularly useful where iteration speed and output stability are critical, and where teams want to reduce routine manual testing and parameter tuning.

Key Tags

Optimizing workflows

Generating ideas and experiments
