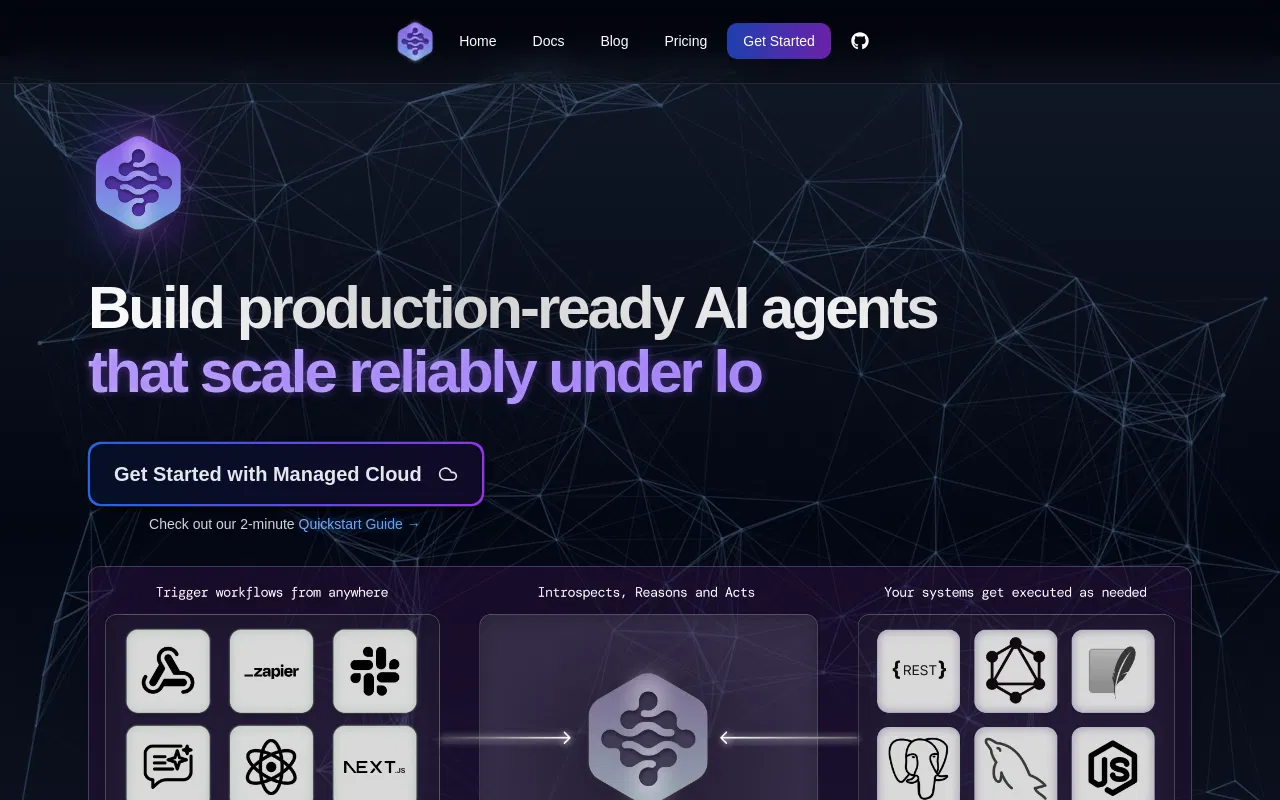

Langtrace AI

Langtrace AI streamlines Large Language Model (LLM) management, offering features like prompt engineering, LLM comparison, and performance monitoring to optimize LLM workflows.

Description

Langtrace AI is an observability and evaluation platform designed to streamline the development, deployment, and monitoring of applications powered by Large Language Models (LLMs). It provides developers and enterprises with comprehensive tools to gain visibility into LLM workflows, optimize performance, manage costs, and ensure reliability and security. The platform's core value lies in unifying disparate monitoring, testing, and debugging tasks into a single, integrated environment, thereby accelerating the LLM application lifecycle from prototyping to production.

Key features: The platform supports prompt engineering and experimentation, allowing teams to version, test, and compare different prompts and model configurations. It offers automated evaluations that assess model outputs for accuracy, toxicity, or custom metrics. For monitoring, Langtrace provides real-time dashboards that track token usage, latency, costs, and error rates across multiple LLM providers. Its security features include data privacy controls, support for on-premise deployment, and SOC 2 Type II compliance, making it suitable for handling sensitive data. The platform also traces AI agent workflows and integrates with popular frameworks such as LangChain and LlamaIndex.
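To make the monitoring metrics concrete, the following is a minimal sketch of how per-provider token usage, cost, latency, and error rate could be aggregated from logged calls. It is not Langtrace's API: the `LLMCall` record, the `summarize` function, the provider names, and the prices in `PRICE_PER_1K` are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class LLMCall:
    """One logged LLM request (hypothetical record shape)."""
    provider: str
    prompt_tokens: int
    completion_tokens: int
    latency_ms: float
    error: bool = False


# Hypothetical prices in USD per 1,000 tokens: (prompt, completion). Not real rates.
PRICE_PER_1K = {"provider_a": (0.5, 1.5), "provider_b": (0.25, 1.0)}


def summarize(calls):
    """Aggregate token usage, cost, average latency, and error rate per provider."""
    groups = defaultdict(list)
    for call in calls:
        groups[call.provider].append(call)

    summary = {}
    for provider, group in groups.items():
        price_in, price_out = PRICE_PER_1K[provider]
        cost = sum(
            c.prompt_tokens / 1000 * price_in + c.completion_tokens / 1000 * price_out
            for c in group
        )
        summary[provider] = {
            "calls": len(group),
            "tokens": sum(c.prompt_tokens + c.completion_tokens for c in group),
            "cost_usd": round(cost, 6),
            "avg_latency_ms": sum(c.latency_ms for c in group) / len(group),
            "error_rate": sum(c.error for c in group) / len(group),
        }
    return summary
```

Calling `summarize` on a list of `LLMCall` records returns one dictionary per provider, which is roughly the shape of data a dashboard of this kind would plot over time.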

What sets Langtrace apart is its broad integration coverage and developer-centric approach. It offers SDKs for Python and TypeScript that add tracing and logging to existing codebases. It supports frameworks such as LangChain and LlamaIndex, as well as vector databases, allowing detailed inspection of retrieval-augmented generation (RAG) pipelines. Unlike basic logging tools, it offers trace-based evaluation, in which entire execution chains can be captured, replayed, and scored, giving step-by-step insight into complex, multi-step AI agent interactions.
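The idea of trace-based evaluation can be sketched in a few lines: record every step of a pipeline as a span, then score the whole trace rather than only the final output. The example below is a toy illustration under stated assumptions, not the Langtrace SDK. `Span`, `Trace`, `retrieve`, `generate`, `run_pipeline`, and `score_trace` are hypothetical names, and the retriever and generator are stand-ins for a vector database lookup and an LLM call.

```python
import time
from dataclasses import dataclass, field


@dataclass
class Span:
    """One recorded step of a pipeline run."""
    name: str
    inputs: dict
    output: object
    duration_ms: float


@dataclass
class Trace:
    """Ordered list of spans captured during a single run."""
    spans: list = field(default_factory=list)

    def step(self, name, fn, **inputs):
        # Run the step, time it, and record its inputs and output as a span.
        start = time.perf_counter()
        output = fn(**inputs)
        elapsed_ms = (time.perf_counter() - start) * 1000
        self.spans.append(Span(name, inputs, output, elapsed_ms))
        return output


def retrieve(query):
    # Stand-in for a vector database lookup.
    docs = {"refund": "Refunds are issued within 14 days."}
    return [text for key, text in docs.items() if key in query.lower()]


def generate(query, context):
    # Stand-in for an LLM call that answers from the retrieved context.
    return context[0] if context else "I don't know."


def run_pipeline(query):
    trace = Trace()
    context = trace.step("retrieve", retrieve, query=query)
    answer = trace.step("generate", generate, query=query, context=context)
    return answer, trace


def score_trace(trace, expected_substring):
    # Score intermediate steps as well as the final answer.
    return {
        "retrieved_any": bool(trace.spans[0].output),
        "answer_correct": expected_substring in trace.spans[-1].output,
    }
```

Because each span stores its inputs, a failing run can be inspected step by step to see whether the retrieval or the generation stage caused the wrong answer.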

Langtrace is aimed at AI engineering teams, ML platform engineers, and enterprises building production-grade LLM applications. Specific use cases include development teams that need to compare the performance and cost of models from OpenAI, Anthropic, or open-source providers; companies that require stringent security, compliance, and data privacy for their AI deployments; and organizations implementing complex AI agents or RAG systems that need detailed observability to debug issues and improve accuracy. It is particularly valuable in industries such as finance, healthcare, and technology, where audit trails and performance guarantees are critical.

Pricing follows a freemium model with a generous free tier for individual developers and small teams, while paid enterprise plans offer advanced features, higher usage limits, and dedicated support. The platform scales with the complexity and volume of LLM operations.