Thesys

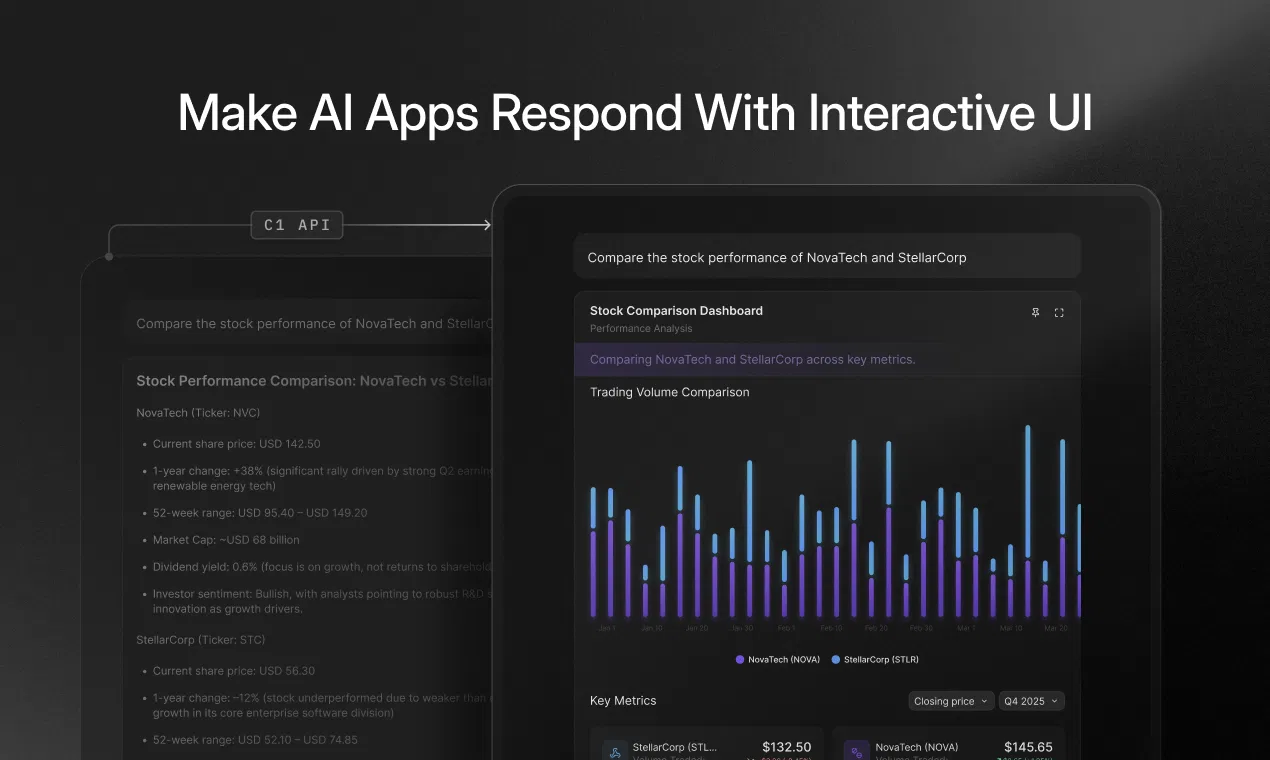

Augments LLMs to generate interactive UI components like charts and forms in real time with minimal code.

Description

Thesys C1 is a Generative UI API from Thesys that lets large language models return dynamic, interactive user interfaces as part of their responses. Instead of emitting only static text or code snippets, an LLM connected to C1 can render working UI elements such as data visualizations, input forms, and informational cards, which makes its answers richer and directly actionable. The main benefit is faster development of AI-powered applications, because developers can skip much of the labor-intensive frontend coding normally needed to present model output.

Key features: The API generates several kinds of interactive components, including charts and graphs for data representation, customizable forms for collecting user input, and styled cards for presenting structured information. Components are produced in real time and delivered as part of the LLM's response stream. Integration is designed to be simple, requiring only two lines of code to connect to existing LLM workflows, frameworks, or Model Context Protocol (MCP) servers, which reduces the UI development overhead typically associated with building AI applications.
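To make the idea of UI elements arriving inside a response stream concrete, here is a minimal sketch of a client that assembles component specs from streamed text chunks. It assumes, purely for illustration, that each component arrives as one line of JSON; this is not C1's actual wire format, and `iter_components` and the field names are hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_components(chunks: Iterable[str]) -> Iterator[dict]:
    """Yield one component spec per complete line of JSON in the stream.

    Assumption: components are newline-delimited JSON objects. Chunks may
    split a line anywhere, so incomplete text is buffered until its
    newline arrives.
    """
    buffer = ""
    for chunk in chunks:
        buffer += chunk
        # Emit every complete line currently in the buffer.
        while "\n" in buffer:
            line, buffer = buffer.split("\n", 1)
            if line.strip():
                yield json.loads(line)
    # Emit a final component that was not newline-terminated.
    if buffer.strip():
        yield json.loads(buffer)


if __name__ == "__main__":
    # Hypothetical stream: the first spec is split across two chunks.
    stream = ['{"type": "ca', 'rd", "title": "Q3"}\n{"type"', ': "form"}\n']
    for component in iter_components(stream):
        print(component["type"])
```

Because each spec is yielded as soon as its line is complete, a frontend could start drawing the first component while later ones are still being generated.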

What sets Thesys C1 apart is its focus on being a lightweight, model-agnostic layer that sits between the LLM and the end-user interface. It is not a standalone app builder but a specialized API that interprets structured data or instructions from the LLM and renders the corresponding UI widgets. Because it is accessed through API calls, it works from any development platform that can make them, and it is designed to integrate with any LLM provider or custom model. Teams can therefore keep their existing AI stack while extending what its output can do, including complex, multi-step interactive experiences built without manual frontend work.
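The layering described above can be sketched as a small dispatcher that takes structured output from any model and maps it to a renderer. Everything here is an assumption made for illustration: the spec shape (`type`, `title`, `fields`, `points`), the HTML output, and the function names are hypothetical and do not represent the C1 API.

```python
import html
import json


def render_card(spec: dict) -> str:
    title = html.escape(spec["title"])
    body = html.escape(spec["body"])
    return f"<div class='card'><h3>{title}</h3><p>{body}</p></div>"


def render_form(spec: dict) -> str:
    fields = "".join(
        f"<label>{html.escape(name)}<input name='{html.escape(name)}'></label>"
        for name in spec["fields"]
    )
    return f"<form>{fields}<button>Submit</button></form>"


def render_chart(spec: dict) -> str:
    # A text bar chart stands in for a real charting component.
    rows = "".join(
        f"<li>{html.escape(label)}: {'#' * int(value)}</li>"
        for label, value in spec["points"]
    )
    return f"<ul class='chart'>{rows}</ul>"


# Hypothetical registry: the layer only needs the spec's "type" field,
# so it does not care which model produced the spec.
RENDERERS = {"card": render_card, "form": render_form, "chart": render_chart}


def render_llm_output(raw: str) -> str:
    """Render a JSON component spec, falling back to plain text."""
    try:
        spec = json.loads(raw)
    except json.JSONDecodeError:
        return f"<p>{html.escape(raw)}</p>"
    if not isinstance(spec, dict):
        return f"<p>{html.escape(raw)}</p>"
    renderer = RENDERERS.get(spec.get("type"))
    if renderer is None:
        return f"<p>{html.escape(raw)}</p>"
    return renderer(spec)


if __name__ == "__main__":
    print(render_llm_output('{"type": "form", "fields": ["email", "issue"]}'))
    print(render_llm_output("Just a plain text answer."))
```

Falling back to plain text for unrecognized output keeps ordinary text responses working, which is one way such a layer could be added to an existing stack without changing the model behind it.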

Thesys C1 is aimed at developers and engineering teams building AI assistants, chatbots, data analysis tools, or any application where an LLM's response benefits from visual or interactive augmentation. Specific use cases include AI data analysts that produce live charts from queries, customer support bots that generate ticket forms on the fly, and educational tools in which an AI tutor renders quizzes and progress trackers. It is particularly relevant for startups and product teams that want to ship feature-rich, interactive AI applications faster, with a stated speedup of at least ten times from eliminating approximately 80% of the typical UI development work.