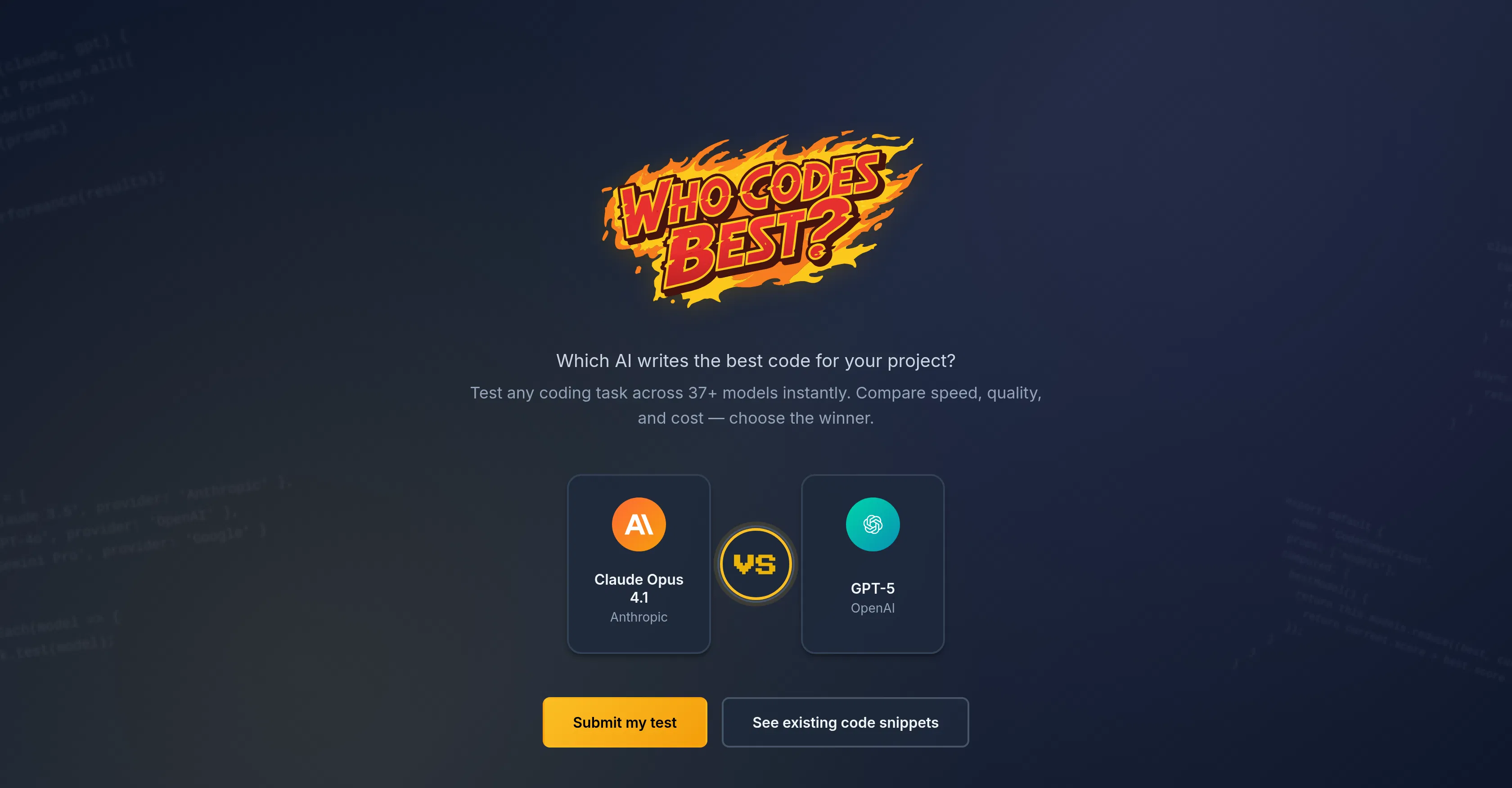

Who Codes Best?

Benchmarks and compares AI coding assistants by testing their code generation and review capabilities.

Description

Who Codes Best? is a specialized analytical platform created to provide developers and technical leaders with objective, data-driven insights into the performance of various AI coding assistants. It addresses the common challenge of choosing the right tool by conducting rigorous, standardized benchmarks that test core programming capabilities. The platform's primary value lies in transforming subjective opinions into measurable, comparable scores, helping users make informed decisions based on actual performance rather than marketing claims or anecdotal evidence.

Key features: The platform runs comprehensive benchmark tests that evaluate AI models on tasks like code generation from natural language prompts, bug detection and fixing in existing code snippets, and writing unit tests. It provides detailed scorecards for each model, breaking down performance by programming language and specific task type. Users can conduct head-to-head comparisons between any two featured assistants to see side-by-side results. The service also publishes analysis reports and articles that interpret the benchmark data, offering insights into trends and the evolving landscape of AI coding tools.
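As a rough illustration of how per-language, per-task scorecards and head-to-head comparisons could be derived from raw benchmark results, here is a minimal Python sketch. It is not the platform's actual method: the assistant names, the scores, and the `scorecard` and `head_to_head` helpers are all hypothetical and exist only for this example.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical benchmark results: (assistant, language, task_type, score in [0, 1]).
# Names and numbers are invented for illustration only.
RESULTS = [
    ("assistant_a", "python", "generation", 0.80),
    ("assistant_a", "python", "bug_fix", 0.60),
    ("assistant_a", "javascript", "generation", 0.70),
    ("assistant_b", "python", "generation", 0.75),
    ("assistant_b", "python", "bug_fix", 0.90),
    ("assistant_b", "javascript", "generation", 0.50),
]


def scorecard(results, assistant):
    """Average score per (language, task_type) pair for one assistant."""
    buckets = defaultdict(list)
    for name, language, task, score in results:
        if name == assistant:
            buckets[(language, task)].append(score)
    return {key: mean(scores) for key, scores in buckets.items()}


def head_to_head(results, first, second):
    """Score difference (first minus second) on the categories both assistants share."""
    a = scorecard(results, first)
    b = scorecard(results, second)
    return {key: round(a[key] - b[key], 4) for key in a.keys() & b.keys()}


if __name__ == "__main__":
    comparison = head_to_head(RESULTS, "assistant_a", "assistant_b")
    for (language, task), delta in sorted(comparison.items()):
        print(f"{language:<12}{task:<12}{delta:+.2f}")
```

A positive difference means the first assistant scored higher in that category. Restricting the comparison to shared categories avoids penalizing an assistant for tasks it was never tested on.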

What makes it unique is its methodological focus on impartial, repeatable testing that simulates real-world developer scenarios rather than abstract academic problems. The platform is a web-based service with a clean, data-centric interface designed for quick comprehension of complex results. It regularly updates its benchmarks to include the latest models and versions from major providers. While it doesn't integrate directly into development environments, its findings can inform decisions about which coding assistant to integrate into IDEs like VS Code or JetBrains products.

The platform is ideal for software developers, engineering managers, and CTOs who need to select and standardize an AI coding tool across their team. It is equally valuable for individual programmers curious about the strengths and weaknesses of tools like GitHub Copilot, Amazon CodeWhisperer, or Tabnine. Specific use cases include conducting a formal tool evaluation before a company-wide subscription, understanding which assistant performs best for a particular language like Python or JavaScript, and keeping up with the rapidly changing capabilities of new model releases so that a development team is using the most effective assistant available.