LLMWise

Provides a unified API for accessing, comparing, and combining results from leading language models.

Description

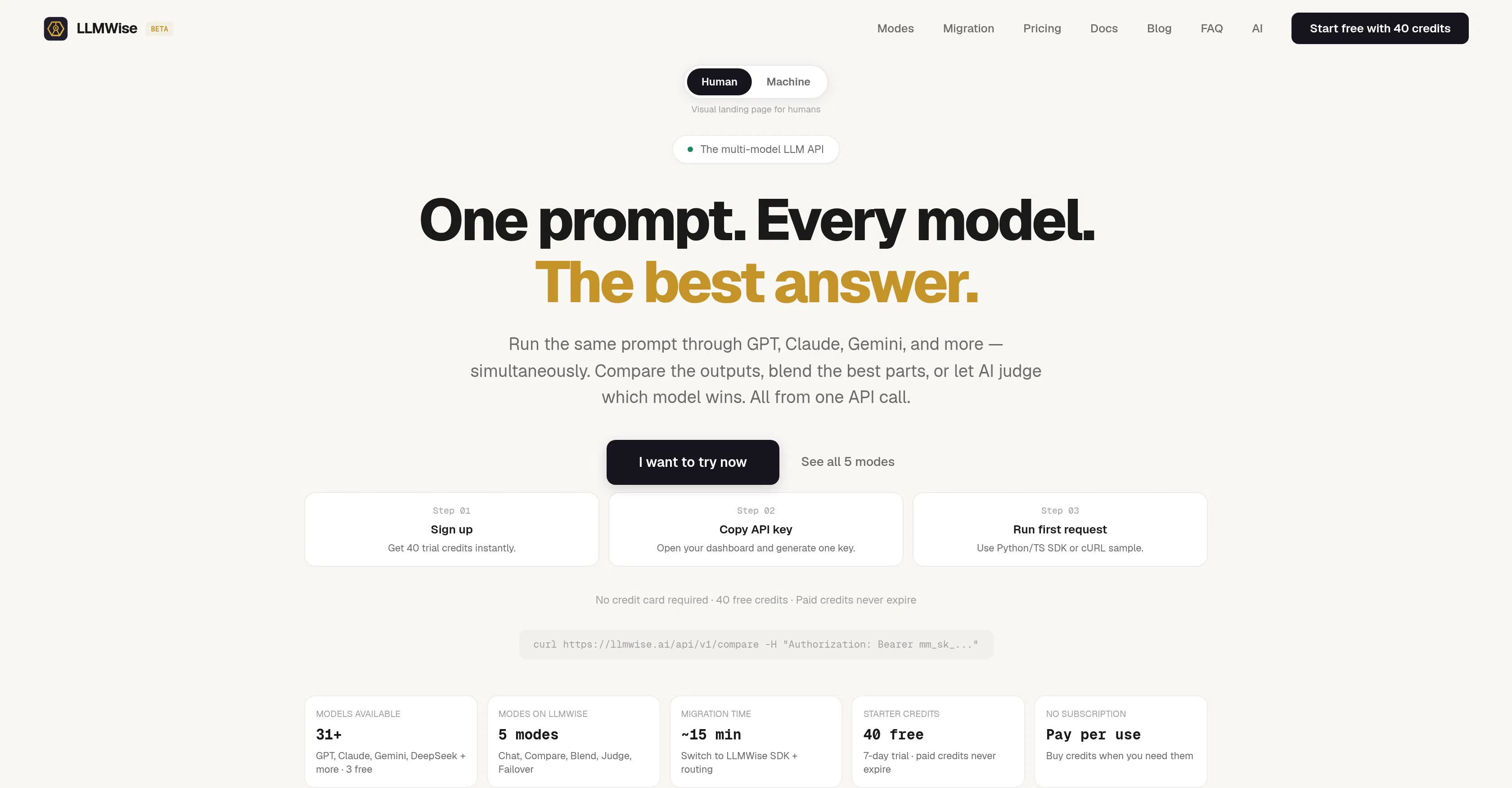

LLMWise is a multi-model API for large language models (LLMs) that consolidates access to AI systems from different providers. Instead of integrating with each provider separately, developers get a single entry point to models such as GPT-5.2, Claude, Gemini, DeepSeek, Llama, and Grok. This simplifies the AI technology stack and lets teams focus on building applications rather than managing connections.

Key features: a single API key for dozens of models from different vendors; side-by-side comparison of responses from multiple models to the same query, run in parallel; "blending", which automatically combines the best fragments of responses from different models into a single result; intelligent routing of each query to the most suitable model based on cost, speed, or required quality; built-in tools for testing and evaluating model performance; and centralized quota and expense management.
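To make the routing idea concrete, here is a minimal Python sketch of choosing a model by cost, speed, or quality. It is not LLMWise's actual routing logic or API: the `ModelProfile` type, the `route` function, the model names, and every number in the catalogue are hypothetical placeholders chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """Hypothetical per-model metadata a router might consult."""
    name: str
    cost_per_1k_tokens: float  # invented figure, in USD
    median_latency_s: float    # invented figure, in seconds
    quality_score: float       # invented score between 0 and 1


# Placeholder catalogue: names and numbers are made up for this sketch.
CATALOGUE = [
    ModelProfile("small-fast", 0.10, 0.4, 0.62),
    ModelProfile("balanced", 0.50, 0.9, 0.78),
    ModelProfile("large-accurate", 2.00, 2.5, 0.93),
]


def route(priority: str, min_quality: float = 0.0) -> ModelProfile:
    """Pick a model by priority, ignoring models below the quality floor."""
    candidates = [m for m in CATALOGUE if m.quality_score >= min_quality]
    if not candidates:
        raise ValueError("no model meets the quality floor")
    if priority == "cost":
        return min(candidates, key=lambda m: m.cost_per_1k_tokens)
    if priority == "speed":
        return min(candidates, key=lambda m: m.median_latency_s)
    if priority == "quality":
        return max(candidates, key=lambda m: m.quality_score)
    raise ValueError(f"unknown priority: {priority!r}")
```

In this sketch, `route("cost", min_quality=0.7)` skips the cheapest model because it falls below the quality floor and selects `balanced` instead. A production router would presumably draw these figures from live measurements rather than a static table.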

A key technical differentiator is the abstraction layer, which standardizes interaction with provider APIs that each have their own quirks. The platform reports detailed usage analytics, including latency, cost, and request success rates. LLMWise integrates into existing projects via a REST API and provides SDKs for popular programming languages. Under the freemium model, you can start with a free request limit.
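As a rough illustration of what such an abstraction layer does, the Python sketch below normalizes two differently shaped provider responses into one result type and queries both in parallel while recording latency. Everything in it is an assumption made for the example: the stub functions `_vendor_a` and `_vendor_b`, their payload shapes, and the `Completion`, `complete`, and `compare` names do not come from LLMWise or from any real provider SDK.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Completion:
    """One normalized result shape, regardless of which vendor answered."""
    model: str
    text: str
    latency_s: float


# Stub providers standing in for real network calls. The payload shapes
# are invented to show that each vendor may format responses differently.
def _vendor_a(prompt: str) -> dict:
    return {"choices": [{"message": {"content": f"A says: {prompt}"}}]}


def _vendor_b(prompt: str) -> dict:
    return {"output": {"text": f"B says: {prompt}"}}


# Each adapter hides one vendor's response format behind a str -> str call.
ADAPTERS: Dict[str, Callable[[str], str]] = {
    "vendor-a": lambda p: _vendor_a(p)["choices"][0]["message"]["content"],
    "vendor-b": lambda p: _vendor_b(p)["output"]["text"],
}


def complete(model: str, prompt: str) -> Completion:
    """Call one model through its adapter and time the call."""
    start = time.perf_counter()
    text = ADAPTERS[model](prompt)
    return Completion(model, text, time.perf_counter() - start)


def compare(prompt: str) -> Dict[str, Completion]:
    """Send the same prompt to every registered model in parallel."""
    with ThreadPoolExecutor(max_workers=len(ADAPTERS)) as pool:
        futures = {name: pool.submit(complete, name, prompt) for name in ADAPTERS}
        return {name: future.result() for name, future in futures.items()}
```

Calling `compare("hello")` returns one `Completion` per registered adapter, so the caller can inspect text and latency side by side without knowing how each vendor structures its payload.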

LLMWise is aimed at developers building AI applications who want to avoid vendor lock-in, and at researchers and analysts comparing the effectiveness of different language models. It is also useful for startups that need flexibility and resilience in their choice of AI provider, and for companies looking to reduce generative AI costs by automatically routing tasks to the most economical models. Use cases range from chatbots and assistants with improved reliability to bulk content generation followed by automatic selection of the best outputs.